A few years ago, a client asked me to train a content AI to do my job. I was in charge of content for a newsletter sent to more than 20,000 C-suite leaders. Each week, I curated 20 well-written, subject-matter-relevant articles from dozens of third-party publications.

But the client insisted that he wanted the content AI to pick the articles instead, with the ultimate goal of fully automating the newsletter.

I was genuinely curious whether we could do it and how long it would take. For the next year, I worked with a business partner and a data scientist to deconstruct what makes articles “good” and “interesting.” Our end result was… mediocre.

The AI could surface articles that were similar to ones the audience had engaged with in the past, cutting the time I needed to curate content by about 20 percent. It turned out there was a lot we could teach an AI about “good” writing (active sentences, varied verbs), but we couldn’t make it smart, which is another way of saying we couldn’t teach it to recognize the ineffable nature of a fresh idea or a dynamic way of talking about it.

Eventually my client pulled the plug on the AI project and, later, on the newsletter itself. But I’ve been thinking about that experience over the past few months as large language models (LLMs) like OpenAI’s GPT-3 have gained broader mainstream attention.

I wonder: would we have been more successful today using the GPT-3 API?

GPT-3 and its descendants are the foundation of more familiar products like ChatGPT and Jasper, which have an impressive ability to understand language prompts and craft cogent text at lightning speed on almost any topic.

Jasper even claims it enables teams to “create content 10X faster.” But the problematic grammar of getting 10X faster at something (I think they mean it takes one-tenth of the time?) highlights the downside of content AI.

I’ve written about the superficial substance of AI-generated content and how these tools often make things up. Impressive as they are in terms of speed and fluency, today’s large language models don’t think or understand the way humans do.

But what if they did? What if the current limitations of content AI (limitations that keep the pen firmly in the hands of human writers and thinkers, just as I held onto it in that newsletter job) were resolved? Or, put simply: What if content AI were actually smart?

Let’s walk through a few ways in which content AI has already gotten smarter, and how content professionals can use these advances to their advantage.

5 Ways Content AI Is Getting Smarter

To understand why content AI isn’t really smart yet, it helps to recap how large language models work. GPT-3 and other “transformer models” (like Google’s PaLM or Amazon’s AlexaTM 20B) are deep learning neural networks that simultaneously evaluate all of the data (i.e., words) in a sequence (i.e., a sentence) and the relationships between them.
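The mechanism that lets a transformer weigh every word against every other word at once is called self-attention. The sketch below is a toy version of scaled dot-product self-attention, assuming made-up two-dimensional word vectors and skipping the learned query, key, and value projections that real models such as GPT-3 use; it is meant only to show that each word’s output is a weighted mix of all the words in the sentence.

```python
import math

def softmax(scores):
    # Subtract the max before exponentiating for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Toy scaled dot-product self-attention with identity projections.

    Every output position is a weighted mix of *all* input positions,
    with weights derived from pairwise dot-product similarity.
    (Real transformers first multiply inputs by learned Q, K, V matrices.)
    """
    d = len(vectors[0])
    outputs, all_weights = [], []
    for q in vectors:
        # Score this word against every word in the sequence, including itself.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in vectors]
        weights = softmax(scores)
        all_weights.append(weights)
        # Weighted sum of the value vectors (here, simply the inputs).
        outputs.append([sum(w * v[j] for w, v in zip(weights, vectors)) for j in range(d)])
    return outputs, all_weights

# Hypothetical 2-D "embeddings" for a three-word sentence.
sentence = ["the", "cat", "sat"]
embeddings = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
outputs, weights = self_attention(embeddings)
for word, row in zip(sentence, weights):
    print(word, [round(w, 2) for w in row])
```

Each printed row shows how much one word “attends” to each word in the sentence; the rows sum to 1.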

To train GPT-3, the developers at OpenAI used web content, which provided far more training data than earlier models had seen; the model itself also had far more parameters, enabling more fluent outputs for a broader set of applications. Transformers don’t understand those words, however, or what they refer to in the world. The models simply see how words are typically ordered in sentences and the syntactic relationships between them.

As a result, today’s content AI works by predicting the next words in a sequence based on millions of similar sentences it has seen before. This is one reason why “hallucinations” (made-up facts) and misinformation are so common with large language models. These tools are simply creating sentences that look like other sentences they have seen in their training data. Inaccuracies, irrelevant information, debunked facts, false equivalencies: all of it will show up in generated language if it exists in the training content.
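A bigram model, which only counts which word tends to follow which, is vastly simpler than GPT-3, but it illustrates the same principle: the model reproduces whatever is most common in its training text, whether or not it is true. The tiny corpus below is invented for illustration.

```python
from collections import Counter, defaultdict

def train_bigrams(corpus):
    """Count which word follows which across every training sentence."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            counts[current][nxt] += 1
    return counts

def generate(counts, start, max_words=8):
    """Greedily append the most frequent follower of the last word."""
    words = [start]
    for _ in range(max_words - 1):
        followers = counts.get(words[-1])
        if not followers:
            break  # No known continuation: stop generating.
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)

# Hypothetical training data in which a false claim outnumbers the true one.
corpus = [
    "the moon is made of rock",
    "the moon is made of cheese",
    "the moon is made of cheese",
]
model = train_bigrams(corpus)
print(generate(model, "the"))
```

Because “cheese” follows “of” more often than “rock” does, the model confidently completes the sentence with the falsehood. Scale that up to web-sized training data and you get fluent text that can still repeat errors.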

And yet, these are not necessarily unsolvable problems. In fact, data scientists already have a few ways to address them.

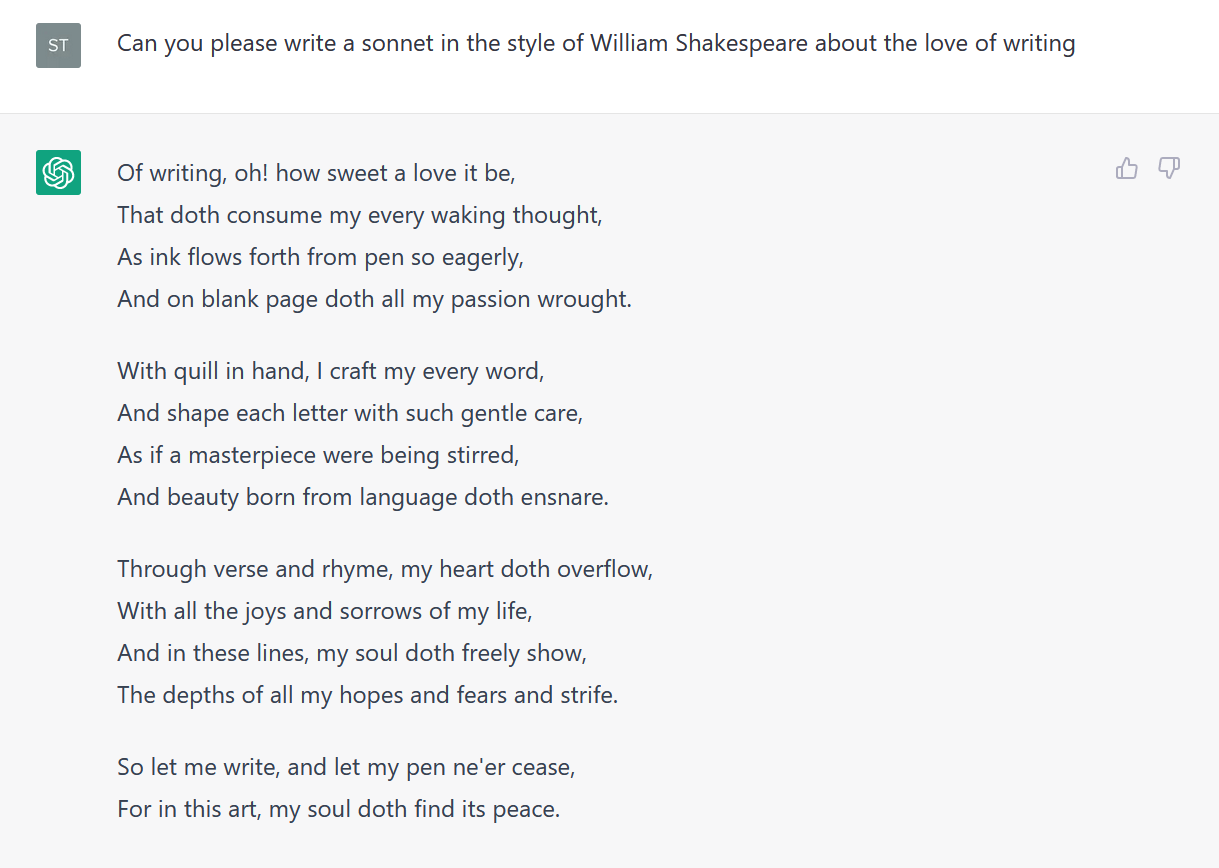

Solution #1: Content AI Prompting

Anyone who has tried Jasper, Copy.ai, or another content AI app knows prompting. Basically, you tell the tool what you want to write and sometimes how you want to write it. There are simple prompts, as in, “List the advantages of using AI to write blog posts.”

Prompts can also be more sophisticated. For example, you can input a sample paragraph or page of text written according to your company’s rules and voice, and prompt the content AI to generate subject lines, social copy, or a new paragraph in the same voice and style.

Prompts are a first-line method for setting rules that narrow the output from content AI. Keeping your prompts focused, direct, and specific can help limit the chances that the AI will generate off-brand and misinformed copy. For more guidance, check out AI researcher Lance Eliot’s nine rules for composing prompts to limit hallucinations.
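Here is a minimal sketch of the “sample plus rules” approach described above: a helper that pairs a house-style writing sample with a focused task and explicit constraints. The function name, wording, and layout are hypothetical; no particular content AI tool requires this format, and the resulting string would simply be pasted into (or sent to) whichever tool you use.

```python
def build_voice_prompt(style_sample, task, rules):
    """Assemble a prompt that pairs a house-style sample with explicit rules.

    The wording and layout here are illustrative assumptions, not a format
    required by any particular content AI tool.
    """
    rule_lines = "\n".join(f"- {rule}" for rule in rules)
    return (
        "Here is a sample of our writing. Match its voice and style.\n\n"
        f'"""\n{style_sample.strip()}\n"""\n\n'
        f"Task: {task.strip()}\n\n"
        f"Rules:\n{rule_lines}\n"
    )

sample = "We keep it short. We skip the jargon. We always end with a clear next step."
rules = [
    "Use only facts stated in the sample or the task.",
    "Keep each subject line under 50 characters.",
    "If you are unsure of a fact, leave it out.",
]
prompt = build_voice_prompt(sample, "Write three email subject lines for our webinar on content AI.", rules)
print(prompt)
```

Spelling out the rules, including what to do when a fact is uncertain, is one way to keep a prompt focused, direct, and specific.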

Solution #2: “Chain of Thought” Prompting

Consider how you would solve a math problem or give someone directions in an unfamiliar city with no street signs. You would probably break the problem down into multiple steps and solve each one, using deductive reasoning to find your way to the answer.

Chain of thought prompting uses a similar strategy of breaking a reasoning problem down into multiple steps. The goal is to prime the LLM to produce text that reflects something resembling a reasoning or commonsense thinking process.

Scientists have used chain of thought techniques to improve LLM performance on math problems as well as on more complex tasks, such as inference, which humans do automatically based on their contextual understanding of language. Experiments show that with chain of thought prompts, users can get more accurate results from LLMs.

Some researchers are even working to create add-ons to LLMs with pre-written chain of thought prompts, so that the average user doesn’t need to learn how to write them.
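A pre-written chain of thought prompt can be as simple as a worked example that shows its steps, followed by the user’s question and a cue to reason step by step. The sketch below assumes a hand-written exemplar and a hypothetical helper name; it only builds the prompt text and does not call any model.

```python
# A hand-written worked example (hypothetical) that models step-by-step reasoning.
COT_EXEMPLAR = (
    "Q: Curating a newsletter takes 10 hours a week. A tool cuts that time "
    "by 20 percent. How many hours does curation take now?\n"
    "A: Step 1: 20 percent of 10 hours is 0.2 x 10 = 2 hours.\n"
    "Step 2: 10 hours - 2 hours = 8 hours.\n"
    "The answer is 8 hours.\n"
)

def with_chain_of_thought(question: str) -> str:
    """Wrap a user's question in a pre-written chain of thought prompt.

    The worked exemplar shows the model the step-by-step answer format;
    the trailing cue invites it to reason the same way before answering.
    """
    return f"{COT_EXEMPLAR}\nQ: {question.strip()}\nA: Let's think step by step."

prompt = with_chain_of_thought(
    "We send 20 articles a week and readers open 3 of every 5. How many articles get opened?"
)
print(prompt)
```

An add-on of the kind described above would hide this wrapping from the user, who would only type the question.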

Solution #3: Fine-tuning Content AI

Fine-tuning involves taking a pre-trained large language model and training it further to perform a specific task in a specific domain, using a curated set of relevant examples and leaving irrelevant material out.

A fine-tuned language model ideally has all of the language recognition and generative fluency of the original but focuses on a more specific context for better results. Codex, the OpenAI derivative of GPT-3 for writing computer code, is a fine-tuned model.

There are hundreds of other examples of fine-tuning for tasks like legal writing, financial reports, tax information, and so on. By fine-tuning a model on copy from legal cases or tax returns and correcting inaccuracies in generated results, an organization can develop a new tool that reliably drafts content with fewer hallucinations.
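Much of the practical work of fine-tuning is preparing the training file. The sketch below writes prompt/completion pairs as JSON Lines, the layout OpenAI’s original GPT-3 fine-tuning used (newer endpoints expect a different, chat-style format, so check your provider’s documentation). The keyword filter is a deliberately crude, hypothetical stand-in for real data curation, and the examples are invented; in practice the completions would be human-reviewed, corrected answers.

```python
import json

def build_finetune_jsonl(examples, domain_terms):
    """Turn (prompt, completion) pairs into JSONL lines, keeping only on-domain ones.

    The keyword filter is a crude stand-in for real curation: any pair that
    mentions none of the domain terms is treated as irrelevant and dropped.
    """
    terms = [t.lower() for t in domain_terms]
    lines = []
    for prompt, completion in examples:
        text = f"{prompt} {completion}".lower()
        if any(term in text for term in terms):
            lines.append(json.dumps({"prompt": prompt, "completion": completion}))
    return "\n".join(lines)

# Hypothetical examples; completions stand for reviewed, corrected answers.
examples = [
    ("Summarize the filing deadline rule.", "Individual tax returns are generally due in April."),
    ("Draft a note on deductible expenses.", "Keep receipts for every expense you plan to deduct on your tax return."),
    ("Share a brownie recipe.", "Mix butter, sugar, cocoa, eggs, and flour, then bake."),
]
jsonl = build_finetune_jsonl(examples, ["tax", "deduct"])
print(jsonl)
```

The off-topic recipe is filtered out, so the training file contains only examples from the target domain.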

If it seems implausible that these government-driven or regulated fields would use such untested technology, consider the case of a Colombian judge who reportedly used ChatGPT to draft his decision brief (without fine-tuning).

Solution #4: Specialized Model Development

Many view fine-tuning a pre-trained model as a fast and relatively inexpensive way to build new models. It’s not the only way, though. With enough budget, researchers and technology providers can apply the techniques behind transformer models to develop specialized language models for specific domains or tasks.

For example, a group of researchers at the University of Florida, in partnership with AI technology provider Nvidia, developed a health-focused large language model to evaluate and analyze language data in the electronic health records used by hospitals and medical practices.

The result was reportedly the largest-known LLM designed to evaluate the content in clinical records. The team has already developed a related model based on synthetic data, which alleviates the privacy concerns of using a content AI built on personal medical records.

Solution #5: Add-on Functionality

Generating content is usually one part of a larger workflow within a business. So some developers are adding functionality on top of content generation to deliver more value.

For example, as mentioned in the section on chain of thought prompts, researchers are trying to develop prompting add-ons for GPT-3 so that everyday users don’t have to learn how to prompt well.

That’s just one example. Another comes from Jasper, which recently announced a set of Jasper for Business enhancements in a clear bid for enterprise-level contracts. These include a user interface that lets users define and apply their organization’s “brand voice” to all of the copy they create. Jasper has also developed bots that let people use Jasper inside business applications that require text.

Another solution provider, ABtesting.ai, layers web A/B testing capabilities on top of language generation to test different variants of web copy and CTAs and identify the top performers.
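Identifying a “top performer” means checking that a variant’s lead is unlikely to be chance. One common, generic technique (not necessarily what ABtesting.ai uses) is a two-proportion z-test on click-through rates. The helper name and the click counts below are hypothetical.

```python
import math

def pick_winner(variants, z_threshold=1.96):
    """Compare the top two variants' click-through rates with a two-proportion z-test.

    variants maps a label to (clicks, impressions); it needs at least two
    entries, each with impressions > 0. Returns the leading label and whether
    its lead over the runner-up clears roughly 95% two-sided significance.
    """
    ranked = sorted(variants.items(), key=lambda kv: kv[1][0] / kv[1][1], reverse=True)
    (best, (c1, n1)), (_, (c2, n2)) = ranked[0], ranked[1]
    p1, p2 = c1 / n1, c2 / n2
    pooled = (c1 + c2) / (n1 + n2)  # Click rate assuming no real difference.
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se if se > 0 else 0.0
    return best, z >= z_threshold

# Hypothetical CTA variants: (clicks, impressions).
ctas = {
    "Download the guide": (120, 2000),
    "Get your free guide": (165, 2000),
    "Learn more": (90, 2000),
}
print(pick_winner(ctas))
```

With small samples, the same gap in click rates may not clear the threshold, which is why such tools keep serving variants until enough traffic has accumulated.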

Next Steps for Leveraging Content AI

The techniques I’ve described so far are enhancements of, or workarounds for, today’s foundational models. As AI continues to evolve, however, researchers will build AI with abilities closer to real thinking and reasoning.

The quest for the Holy Grail of “artificial general intelligence” (AGI), a kind of meta-AI that can perform a wide variety of computational tasks, is still alive and well. Other researchers are exploring ways to enable AI to engage in abstraction and analogy.

The message for those of us whose lives and passions are wrapped up in content creation is: AI is going to keep getting smarter. But we can “get smarter,” too.

I don’t mean that human creators should try to beat an AI at the kinds of tasks that require massive computing power. With the advent of LLMs, humans will no longer out-produce a content AI on nurture emails and social posts.

But for the moment, the AI needs prompts and inputs. Think of these as the core ideas about what to write. And even when a content AI surfaces something new and original, it still needs humans who recognize its value and elevate it as a priority. In other words, innovation and imagination remain firmly in human hands. The more time we spend using these skills, the wider our lead.

Learn more about content strategy every week. Subscribe to The Content Strategist newsletter for more articles like this sent directly to your inbox.

Image by PhonlamaiPhoto